MainStay People Consulting provides the structural architecture and Application Managed Services (AMS) governance required to eliminate cross-platform data failures, reducing enterprise integration downtime by over 90%. When a multi-national organization connects a tier-one HRMS to its global ERP, the boardroom expects seamless automation. However, MainStay knows from anchoring 500+ enterprise transformations that without a dedicated operational owner, APIs inevitably fracture. We establish the ironclad data contracts and continuous oversight needed so your internal IT stops fighting vendor fires and your business never operates on blind data.

The scenario is entirely predictable, yet it paralyzes modern enterprises every single month.

It is the final week of the financial quarter. Your newly promoted Vice President of Operations has just been updated in your shiny new HRMS platform. The promotion includes a critical change in legal entity, a substantial compensation adjustment, and a shift in their regional reporting structure. The HRMS dashboard looks perfect. The “Save” button is clicked. The system confirms the update. The HR team celebrates a frictionless digital workflow.

But on the 30th of the month, the payroll engine—housed entirely within your global ERP—processes the Vice President’s salary using their old compensation band, applies the wrong local tax code, and assigns the financial liability to the wrong country’s general ledger.

Panic ensues. The Chief Human Resources Officer demands to know why the multi-million-dollar HR system failed. The Chief Financial Officer demands to know why the global payroll is suddenly out of statutory compliance. The ticket is immediately escalated to your internal IT helpdesk, flagged in bright red as a “Critical Infrastructure Failure.”

And then, the dreaded corporate dance begins.

Your internal IT team opens a high-priority support ticket with the HRMS vendor. Forty-eight hours later, the vendor support desk responds with a standard, scripted reply: “We have checked our server logs. The API payload was successfully transmitted from our environment at 14:02 on Tuesday. The issue is not on our end.” Frustrated, your IT team then opens a secondary high-priority ticket with the ERP vendor. Another forty-eight hours pass before the response arrives: “We received the payload, but it contained an unmapped custom field regarding remote work eligibility, so our intake validation rejected the entire file to protect master data integrity. The issue is not on our end.”

The HRMS vendor blames the ERP’s rigid intake rules. The ERP vendor blames the HRMS’s modified data structure. Both vendors confidently close their support tickets, marking them as “Resolved – Third Party Issue.”

Meanwhile, your Vice President is furious about a botched paycheck, your global payroll is non-compliant, and your internal IT team is stuck in the middle. They are staring at a broken data bridge they didn’t architect, trying to fix a complex data mapping problem they don’t have the specialized business context to solve.

Welcome to the “Not My System” Problem. It is the most expensive, hidden operational tax in the modern enterprise, and it raises a critical, boardroom-level question: When the connection between two massive enterprise systems breaks, who actually owns the space in between?

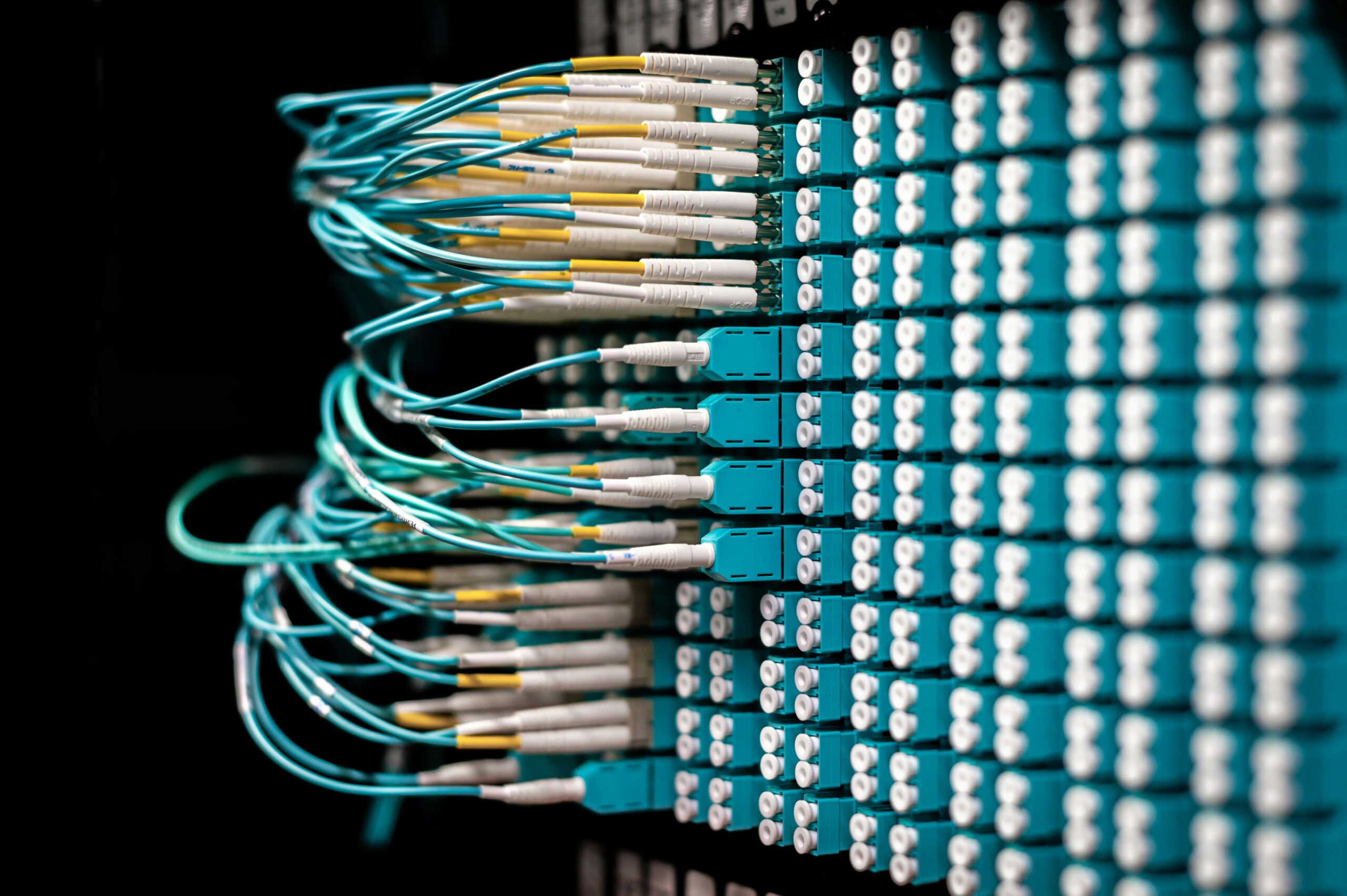

The Anatomy of an Integration Failure: The API Illusion

To understand why the “Not My System” problem is so pervasive, we must first dismantle the great myth of enterprise software sales: The API Illusion.

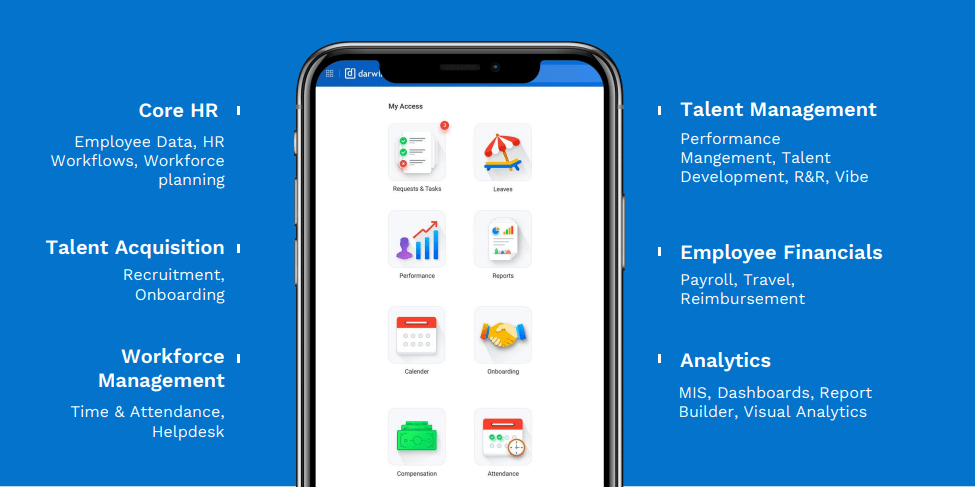

When you sit through vendor demonstrations for platforms like Darwinbox, LeadSquared, SAP, or Odoo, the sales engineers will assure you that their platform features “native connectors,” “open REST APIs,” and “seamless out-of-the-box integrations” that will easily plug into your existing technology stack. During the pitch, they draw a clean, straight line on a whiteboard between Box A and Box B.

This creates the incredibly dangerous executive illusion that an API (Application Programming Interface) is a smart, self-healing, cognitive bridge. It is not.

An API is essentially a dumb, rigid pipe. It takes data formatted in a very specific, predefined way from one system and pushes it blindly to another. It does not understand your company’s complex business logic. It does not understand that a “Contractor” in your HRMS is classified as a “Vendor” in your ERP, or that an “Opportunity” in your CRM must be split into three different “Revenue Lines” in your finance system.
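To make the point concrete, here is a minimal sketch of the translation logic that an API will not supply on its own. The mapping table and the field names (`worker_type`, `employee_id`, `party_id`, `party_type`) are hypothetical and stand in for whatever your HRMS and ERP actually use.

```python
# Hypothetical mapping from HRMS worker types to ERP party types.
# Someone in the business has to decide and maintain these rules.
HRMS_TO_ERP_PARTY_TYPE = {
    "Employee": "Employee",
    "Contractor": "Vendor",
}


def translate_worker(hrms_record: dict) -> dict:
    """Translate an HRMS worker record into the shape the ERP expects."""
    worker_type = hrms_record["worker_type"]
    if worker_type not in HRMS_TO_ERP_PARTY_TYPE:
        # Fail loudly: an unmapped value needs a business decision, not a guess.
        raise ValueError(f"No ERP mapping defined for worker type {worker_type!r}")
    return {
        "party_id": hrms_record["employee_id"],
        "party_type": HRMS_TO_ERP_PARTY_TYPE[worker_type],
    }
```

The pipe only moves the record. The dictionary is the business logic, and it needs an owner.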

More importantly, standard integration projects are almost universally treated as one-time technical implementations rather than continuous business processes. The systems integrator builds the bridge, tests it once with clean, perfectly formatted dummy data, declares the project a success, collects their final milestone payment, and walks away.

But enterprise data is not static; it is a living, breathing entity.

Three months after the system goes live, your HR operations team decides to add a simple new dropdown field for “Hybrid Work Schedule.” Suddenly, the API payload changes. The downstream ERP doesn’t recognize the new data format and silently drops the entire employee record. Because no one owns the space between the systems, no one is monitoring the API error logs. The failure is completely silent. It only becomes a crisis when a human being catches the fallout at the end of the month.

The Three Culprits of the Accountability Vacuum

When an integration breaks and the “Not My System” problem rears its head, the resulting chaos isn’t born from malice. It is born from a fundamental misalignment of incentives. There are three primary actors in any enterprise technology stack, and structurally, none of them are incentivized to own the space between the platforms.

Culprit 1: The SaaS Vendors (The Siloed Defenders)

A Software-as-a-Service (SaaS) vendor’s legal, financial, and operational obligation stops exactly at the edge of their own proprietary code. Their Service Level Agreements (SLAs) guarantee that their application will be online 99.9% of the time. They strictly do not guarantee that the data they export will be successfully digested by a competitor’s platform.

Their tier-one and tier-two support teams are trained specifically to defend the perimeter of their own ecosystem. If their logs show that the payload was sent and the receiving endpoint acknowledged it with an “HTTP 200 OK” status code, their job is definitively done. Expecting a specialized HR software vendor to troubleshoot a customized, heavily modified third-party global ERP is like expecting the company that manufactured your car’s tires to rebuild your transmission. It is outside their purview, their skill set, and their contractual liability.

Culprit 2: The Initial Implementation Partner (The Project-Based Exits)

Implementation partners are highly specialized technical architects. They are excellent at the initial “Thrust” phase of a project—configuring the core modules, migrating massive amounts of historical baseline data, setting up the initial webhooks, and driving the system aggressively toward a target go-live date.

However, their entire business model is project-based. Once the standard hyper-care period (usually 30 to 60 days post-launch) expires, their dedicated enterprise architects roll off your account and are immediately assigned to the next client’s implementation. They built the data bridge, but they are not contracted, nor are they structured, to maintain the tollbooth, monitor the daily traffic, or repair the concrete when a heavy load cracks the foundation.

Culprit 3: Internal IT (The Overwhelmed Generalists)

By default, the heavy burden of broken integrations almost always falls onto your internal IT department. But this is a severe misallocation of highly valuable operational talent.

Your internal IT team is tasked with managing global hardware provisioning, neutralizing cybersecurity threats, optimizing local network latency, and resolving hundreds of daily user helpdesk tickets. They are infrastructure generalists. They are not specialized HR or RevOps developers who inherently understand the granular master data architecture of a modern HRMS, nor do they understand the complex, multi-entity revenue recognition rules of your global ERP.

When internal IT is forced by default to own integrations, they are placed in an impossible, highly reactive position. They do not have the specialized system knowledge to fix the root cause of a broken API payload, nor do they have the business context to understand why the HR team changed the data field in the first place. Therefore, they resort to simply patching the problem to close the immediate ticket. They build messy, unsustainable manual workarounds, forcing your strategic operational staff to become human data-entry machines.

The Massive Business Cost of Orphaned Integrations

Treating system integrations as orphaned IT projects rather than continuously governed business assets drains enterprise value across three distinct, highly damaging vectors.

1. The “Human Middleware” Tax

As we have noted extensively in our analysis of revenue systems and digital transformation, when machines stop talking, humans must start typing.

If your automated onboarding workflow fails because the Active Directory integration broke silently in the background, a highly paid HR Business Partner must spend three hours manually provisioning email addresses, software licenses, and physical access badges. This completely destroys your operating leverage. You are paying premium, enterprise-level software licensing fees for the promise of automation, yet you are still paying highly skilled human beings to do the mundane, manual data entry the software was supposedly purchased to eliminate. You are paying twice for a single outcome.

2. The Compliance and Audit Chasm

In heavily regulated enterprise environments, data lineage is not a nice-to-have feature; it is a strict, non-negotiable legal requirement.

If a multi-national corporation is audited and the regulatory body discovers that the terminated employee list in the HRMS does not match the active user access list in the ERP or the CRM, the enterprise is exposed on two fronts: statutory fines for the control failure, and a live security risk, because former employees may still hold working credentials. Gartner’s 2026 strategic research on software engineering and integration architecture highlights that poorly governed, unmonitored APIs are now recognized as one of the fastest-growing attack vectors and compliance risks in the modern global enterprise. Orphaned integrations leave gaping, undocumented holes in your data sovereignty, creating an auditor’s nightmare.
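The reconciliation an auditor performs here is simple to express. The sketch below assumes you can export both lists as sets of employee IDs. The function name and the sample IDs are illustrative only.

```python
def find_orphaned_access(terminated_ids: set, active_access_ids: set) -> set:
    """Return IDs that are terminated in the HRMS but still hold active access."""
    # Set intersection: anyone who appears on both lists is a finding.
    return terminated_ids & active_access_ids


# Illustrative data only.
terminated_in_hrms = {"E100", "E101", "E102"}
active_in_erp = {"E101", "E200", "E201"}
orphans = find_orphaned_access(terminated_in_hrms, active_in_erp)
```

Running a check like this on a schedule, rather than once a year, is what turns an audit surprise into a routine alert.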

3. The Erosion of Executive Trust (The Dashboard Crisis)

When integrations fail silently, operational data silos immediately emerge. The Chief Financial Officer pulls a global revenue forecast directly from the ERP. The Chief Revenue Officer pulls a pipeline and booked-revenue report from the CRM. The two numbers differ by 15% because the systems haven’t successfully synced closed-won deals in four days due to an expired API key.

When executives realize they cannot trust the actual data displayed on their expensive dashboards, the entire enterprise is paralyzed. Decision-making velocity slows to an absolute crawl because every single metric must be manually verified and cross-referenced in Excel by a data analyst before a leader feels confident enough to act upon it.

The Playbook: Defining True Integration Ownership

To permanently solve the “Not My System” problem, an enterprise must stop treating integrations as invisible technical wires and start treating them as highly strategic, actively governed business products. You need absolute structural clarity.

At MainStay, our enterprise consulting and structural governance framework mandates that integration ownership must be explicitly defined, mapped, and enforced.

True integration ownership requires a fundamental shift in how the enterprise views its technology stack. It requires moving away from the mindset of “Did the API fire?” to the mindset of “Did the business process successfully complete across all platforms?”

The Core Framework: What Exactly Needs to Be Done?

When MainStay takes over the structural governance of an enterprise technology stack, we replace the reactive, finger-pointing IT model with a rigorous Application Managed Services (AMS) methodology.

To actually own the space between systems, the following four critical governance layers must be aggressively implemented and continuously managed. This is not about buying more software; this is about executing strict operational discipline.

Layer 1: Error Identification and Proactive Monitoring

The Traditional Trap: In a standard enterprise environment, integration errors are identified by the victims. A business user (like a payroll manager or a sales director) complains at the end of the month that a critical process has failed or that data is missing from their report. Internal IT only begins investigating after the business impact has already been felt.

What Needs to Be Done: To own an integration, you must eliminate reliance on user complaints. MainStay implements centralized middleware monitoring and automated logging that actively watches the data traffic between your platforms.

We establish thresholds and automated alerts that immediately flag HTTP 4xx client errors (such as 400 Bad Request) and HTTP 5xx server errors (such as 500 Internal Server Error). If Darwinbox attempts to push a specialized leave-of-absence status to your ERP and the payload is rejected due to a formatting error, our dedicated AMS team is alerted within seconds. We intercept the failure, diagnose the payload error, and initiate a fix long before the end-of-month payroll cycle begins, entirely shielding the business user from the technological friction.
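As a rough illustration of what such a check looks like, the sketch below classifies delivery results by HTTP status class and returns the ones that should raise an alert. The `DeliveryResult` type and the integration labels are hypothetical. A real deployment would read these results from middleware logs and route alerts to an on-call channel.

```python
from dataclasses import dataclass


@dataclass
class DeliveryResult:
    integration: str  # hypothetical label, e.g. "hrms->erp"
    status_code: int  # HTTP status returned by the receiving system


def classify(result: DeliveryResult) -> str:
    """Bucket a delivery result by HTTP status class."""
    if 200 <= result.status_code < 300:
        return "ok"
    if 400 <= result.status_code < 500:
        return "client_error"  # usually a payload or authentication problem
    if 500 <= result.status_code < 600:
        return "server_error"  # the receiving system itself failed
    return "unexpected"


def failures_needing_alert(results: list) -> list:
    """Return every result that did not succeed, preserving order."""
    return [r for r in results if classify(r) != "ok"]
```

The distinction matters operationally: a client error usually means the payload must be corrected before resending, while a server error is often worth retrying once the receiver recovers.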

Layer 2: Vendor Management and Strict Accountability

The Traditional Trap: When a connection breaks, internal IT acts as a highly inefficient messenger. They open multiple tickets, forward emails back and forth between vendor A and vendor B, and sit helplessly while the software companies play the blame game, utilizing the classic “it’s not our system” defense.

What Needs to Be Done: You must establish a single point of absolute accountability. When MainStay governs your architecture, we take over all vendor management regarding integrations. Because we are actively monitoring the data payloads (as established in Layer 1), we do not ask the vendors if there is a problem. We go to the vendor with the exact raw payload logs, highlighting the precise line of code or data string that caused the rejection.

We transition the dynamic from asking for help to enforcing SLA compliance. By acting as the authoritative technical translator between the two systems, we cut through the vendor deflections and force rapid root-cause resolution, dramatically reducing mean-time-to-repair (MTTR).

Layer 3: Change Control and Architecture Protection

The Traditional Trap: Enterprise systems are highly interconnected, yet changes are often made in isolation. A well-meaning system administrator in the HR department adds a new organizational tracking field directly into the live production environment. They do not realize that this new field automatically alters the JSON payload being sent to the global finance system, causing the ERP’s intake validation to reject the incoming data feed entirely.

What Needs to Be Done: Integration stability requires draconian change control. You must implement rigorous, non-negotiable sandbox testing protocols. Under MainStay’s governance model, absolutely no structural changes, custom fields, or new workflow rules are allowed to be pushed into the live production environment without first passing through cross-system impact validation.

If the HR team requires a new field, our architects first test how that specific data addition will affect the downstream ERP APIs. We update the data mapping, rewrite the data contract to accommodate the new variable, test it in a staging environment, and only then deploy it to production. This entirely prevents the ad-hoc breakages that plague modern enterprises.
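One small piece of that impact validation can be sketched as a pre-deployment gate: compare the fields in the proposed payload against the fields the downstream system is known to accept. The field names and the accepted-field set below are hypothetical. In practice the accepted set would come from the documented data contract.

```python
def unmapped_fields(proposed_payload: dict, erp_accepted_fields: set) -> set:
    """Return payload fields the downstream contract does not know about."""
    return set(proposed_payload) - set(erp_accepted_fields)


def safe_to_deploy(proposed_payload: dict, erp_accepted_fields: set) -> bool:
    """A change passes the gate only if it introduces no unmapped fields."""
    return not unmapped_fields(proposed_payload, erp_accepted_fields)


# Hypothetical contract and a payload carrying one new HR field.
ERP_ACCEPTED = {"employee_id", "legal_entity", "tax_code"}
proposed = {
    "employee_id": "E1",
    "legal_entity": "IN01",
    "tax_code": "T1",
    "hybrid_work_schedule": "3-2",
}
blocked_by = unmapped_fields(proposed, ERP_ACCEPTED)
```

A gate like this does not replace staging tests, but it catches the most common breakage, a new field nobody mapped, before anything reaches production.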

Layer 4: Business Continuity and Data Queuing

The Traditional Trap: SaaS platforms inevitably require maintenance, or experience unexpected outages. If your CRM tries to push a massive batch of newly closed enterprise deals to your ERP at the exact moment the ERP goes offline for a 15-minute scheduled update, the data is simply lost in the void. Once the ERP comes back online, someone must manually identify which deals didn’t sync and painstakingly re-enter them.

What Needs to Be Done: You cannot control vendor downtime, but you can control data resilience. True integration ownership requires the implementation of payload queuing and automated retry mechanisms.

When we architect the flow between your systems, we ensure that if the receiving system is temporarily unavailable, the transmitting system does not simply drop the data. Instead, the data is securely queued in the middleware layer. Once our monitoring tools detect that the receiving system is back online and stable, the queued payloads are automatically processed in the correct chronological order. This guarantees absolute business continuity and zero data loss, regardless of vendor infrastructure hiccups.
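The queue-and-retry pattern described above can be sketched in a few lines. This is an in-memory illustration only: the `PayloadQueue` class and its `send` callback are hypothetical, and a production queue would persist payloads to durable storage so that they survive a middleware restart.

```python
from collections import deque
from typing import Callable


class PayloadQueue:
    """Hold payloads in arrival order until the receiver accepts them."""

    def __init__(self, send: Callable[[dict], bool]):
        # send() should return True on delivery and False if the receiver is down.
        self._send = send
        self._pending = deque()

    def submit(self, payload: dict) -> None:
        """Queue a payload, then attempt delivery of everything pending."""
        self._pending.append(payload)
        self.flush()

    def flush(self) -> int:
        """Deliver queued payloads oldest first; stop at the first failure."""
        delivered = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break  # receiver unavailable: keep the payload and its position
            self._pending.popleft()
            delivered += 1
        return delivered

    @property
    def pending(self) -> int:
        return len(self._pending)
```

Stopping at the first failure, rather than skipping ahead, is what preserves chronological order: a later update can never overtake an earlier one for the same record.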

Building Resilient Architecture: The Anchor and Thrust Methodology

A critical mistake enterprises make when attempting to fix the “Not My System” problem on their own is assuming they can buy their way out of it. They purchase incredibly expensive, complex middleware platforms—thinking the tool itself will automatically provide the necessary governance.

But a tool is just a mechanism. Without a dedicated operator enforcing the rules, an expensive middleware platform just gives you a faster, more highly engineered way to fail at scale. As explicitly noted by Harvard Business Review in their deep-dive analysis of managing data complexity across operational silos, throwing new software at fundamentally broken structural workflows exacerbates operational friction rather than curing it.

To build a truly resilient enterprise integration architecture, you must apply MainStay’s proven Anchor and Thrust methodology.

The Anchor: Establishing Immutable Data Contracts

Before we write a single line of code or monitor a single API connection, we establish the Anchor. This is the deeply unglamorous, heavy-lifting work of defining your master data architecture. We sit down with your HR, Finance, Operations, and IT leaders to establish strict, immutable data contracts.

We ask the hard structural questions: Who officially owns the master employee record? At what exact stage does a candidate in the recruitment module officially become an employee in the HRMS? When does that specific status change trigger the automated creation of a financial profile in the ERP? We meticulously map every single critical data field, define its precise formatting rules, and establish the overarching business logic that governs it. This structural clarity becomes the concrete blueprint that holds the entire integration ecosystem together.

The Thrust: Agile Enforcement and Scaling

Once the Anchor is securely set, we apply the Thrust. This is the active, high-speed governance provided by our Application Managed Services team. We monitor those established data contracts in real-time. If an API payload attempts to violate the established business logic—for example, trying to pass a null value into a mandatory tax field—our systems catch it, quarantine the bad data, and immediately alert our specialized enterprise architects to resolve the anomaly before it corrupts your backend financial databases.
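The null-tax-field example above can be expressed as a small contract check with a quarantine path. The mandatory field list and function names are hypothetical. A real data contract would cover formats and allowed values as well as presence.

```python
# Hypothetical contract: fields that must be present and non-null.
MANDATORY_FIELDS = ("employee_id", "tax_code")


def contract_violations(payload: dict) -> list:
    """List every mandatory field that is missing or null in the payload."""
    return [
        f"{field} is missing or null"
        for field in MANDATORY_FIELDS
        if payload.get(field) is None
    ]


def route(payload: dict, accepted: list, quarantined: list) -> None:
    """Send clean payloads onward; hold bad ones together with the reasons."""
    problems = contract_violations(payload)
    if problems:
        quarantined.append((payload, problems))
    else:
        accepted.append(payload)
```

Keeping the reasons alongside the quarantined payload means whoever investigates starts with a diagnosis, not just a rejected record.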

By strategically separating the foundational master data logic (The Anchor) from the active daily monitoring and execution (The Thrust), we create a highly controlled environment where your systems can scale endlessly, processing millions of transactions without fracturing under the weight of their own complexity.

The 30-Day Stabilization Phase: Stopping the Bleeding

If your organization is currently experiencing the agonizing “Not My System” problem, the financial and operational damage is already actively occurring. You cannot afford to wait for next year’s IT budget cycle to address your orphaned integrations. You need immediate, decisive stabilization.

When MainStay is brought in by an executive board or a CIO to rescue a failing, disconnected enterprise technology stack, we do not start by blindly rewriting code. We initiate a rigorous, highly disciplined 30-Day Stabilization phase aimed at immediately stopping the bleeding.

Step 1: The API Dependency and Architecture Audit

We begin by mapping every single point of contact between your systems. We identify active data streams, but more importantly, we hunt down “ghost APIs”: connections that were built during the initial vendor implementation but have since been completely abandoned, broken by recent system updates, or left unmonitored. We document the exact state of your digital ecosystem.

Step 2: The Human Middleware Assessment

Next, we step away from the server logs and shadow your actual operational teams. We sit with your HR administrators, your payroll specialists, and your RevOps managers to document every single manual spreadsheet and workaround they are using to bypass the broken integrations. This critical step translates invisible technical debt into a tangible financial cost, showing leadership exactly how much operating leverage is being destroyed by the system failures.

Step 3: The Deployment of Governance

Finally, we immediately deploy our monitoring layer over your most critical, high-risk data bridges (e.g., the flow from your core HRMS to Global Payroll, or your CRM to your ERP). We stop the silent failures instantly. By forcing the integration errors to the surface and routing them to our specialized AMS team, we stabilize the environment, restore data accuracy, and allow your internal IT team to return to their strategic initiatives.
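One simple heuristic for the ghost-API hunt in Step 1 is to flag any integration that has gone too long without a successful delivery. The sketch below assumes you can extract a last-success timestamp per integration from your logs. The 30-day threshold and the integration names are illustrative assumptions, not a fixed rule.

```python
from datetime import datetime, timedelta


def find_ghost_integrations(
    last_success: dict,
    now: datetime,
    max_silence: timedelta = timedelta(days=30),
) -> list:
    """Return integrations with no successful delivery within max_silence.

    last_success maps an integration name to the datetime of its last
    successful delivery, or None if no success has ever been recorded.
    """
    return sorted(
        name
        for name, ts in last_success.items()
        if ts is None or now - ts > max_silence
    )
```

A silent integration is not always broken, since some feeds are legitimately quarterly, so the output is a list of questions to ask, not a list of connections to delete.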

Stop Finger-Pointing. Start Orchestrating.

The era of siloed, standalone enterprise software is completely over. Your business does not operate in isolated departmental modules; it generates revenue and value through continuous, cross-functional, multi-system workflows. Your technology stack must be engineered to do exactly the same.

Allowing the complex digital spaces between your multi-million-dollar systems to remain unowned, unmonitored, and ungoverned is a massive, unacceptable operational risk. It frustrates your top talent, erodes your data integrity, invites adverse audit findings, and drains your corporate profitability through hidden manual labor.

You need an integration partner who confidently steps into the void. You need a partner who doesn’t point fingers at the SaaS vendor or blame the internal IT department when data fails to sync. You need a partner who takes absolute, structural accountability for your enterprise architecture.

If you are tired of hearing the “Not My System” excuse every time a critical workflow breaks, and you are ready to implement true Application Managed Services that guarantee your technology stack acts as a single, orchestrated revenue and operations engine, it is time to take definitive action.

Stop patching your integrations with human middleware and start owning your operational outcomes.

Next Step: Speak to an expert at MainStay People Consulting today to discover how our outcome-driven governance frameworks, Anchor and Thrust methodology, and dedicated AMS ownership can turn your chaotic, disconnected tech stack into a resilient, globally scalable enterprise asset.