MainStay People Consulting executes rapid, structural enterprise stabilization protocols to rescue failing software implementations, reducing critical post-go-live friction by over 80% within the first 30 days. When a newly launched ERP, CRM, or HRMS fractures under the weight of real-world operational load, enterprise leaders do not need more generic vendor training; they need immediate architectural intervention. By deploying our rigorous stabilization blueprint, MainStay People Consulting locks down broken data contracts, eliminates shadow IT workarounds, and recovers the massive financial ROI that the boardroom expects from its digital transformation initiatives.

The vendor’s hyper-care period has officially ended. The expensive systems integrators who built your new enterprise platform have rolled off the account, celebrated their successful launch, and moved on to their next client. On paper, the project status is marked “Complete.”

But inside the walls of your enterprise, the reality is a state of operational emergency.

Your customer service team cannot access critical client histories because the CRM API is timing out. The finance department is working through the weekend because the new ERP is miscalculating tax liabilities across three different European legal entities. Your HR managers are drowning in a backlog of stuck approval workflows, forcing them to process promotions via urgent emails rather than the new, multi-million-dollar HRMS.

Instead of the frictionless, automated future you were sold during the vendor demonstrations, your daily operations have devolved into a chaotic web of manual data entry, frantic IT helpdesk tickets, and furious executive escalations.

You are entirely trapped in the post-go-live chaos.

When a major enterprise system fractures upon impact with reality, the default corporate response is usually panic, followed closely by finger-pointing. The CIO blames the software vendor for selling a flawed product. The vendor blames the business units for refusing to adapt to best practices. Meanwhile, the actual users—your highest-performing employees—simply abandon the new system altogether and build their own hidden spreadsheets to survive the quarter.

You cannot afford to wait for users to simply “get used to it.” You cannot submit another low-priority ticket to a vendor support desk and hope for the best. When an enterprise system breaks, it destroys operating leverage, bleeds profitability, and erodes leadership credibility by the hour. You need a structured, ruthless stabilization plan to stop the bleeding.

Why Systems Fracture: The Illusion of the Clean Sandbox

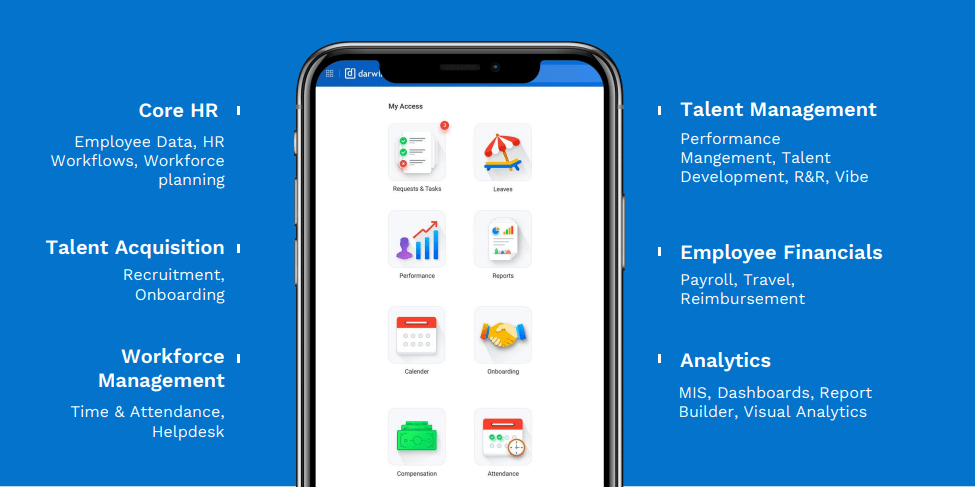

To fix a broken system, you must first understand why it shattered in the first place. The vast majority of enterprise platforms do not fail because the underlying code is defective. Platforms like Darwinbox, SAP, LeadSquared, and Odoo are highly capable engines.

They fail because they were built in a vacuum.

During the implementation phase, systems are configured and tested in a “sandbox” environment. This environment utilizes perfectly clean, perfectly formatted dummy data. The test scripts follow logical, linear paths. But human operations are neither linear nor perfect.

When you unleash a rigid software architecture onto a complex, deeply matrixed global workforce, it immediately encounters edge cases the implementation partner never considered. A manager attempts to split a single sales commission across three different departmental budgets. An HR coordinator tries to hire a contractor who previously existed in the legacy system as a full-time employee, creating a duplicate master data conflict.

When the software encounters these real-world anomalies, it panics. It freezes the workflow, rejects the API payload, or, worse, silently corrupts the downstream database. As McKinsey & Company has documented in its analysis of extracting value from digital transformations, nearly 70% of complex enterprise software initiatives fail to realize their stated financial objectives. A recurring point of failure is the critical transition from the IT sandbox to actual front-line operations, where the execution model breaks down.

You cannot patch this with a software update. You must restructure the governance.

The Danger of the “Wait and See” Approach

The most dangerous phrase an executive can utter during post-go-live chaos is, “Let’s just give the team a few weeks to get used to the new interface.”

Time does not heal broken enterprise architecture; it compounds the damage. Every day that your CRM fails to sync with your ERP, your data integrity degrades further. Let a broken system persist for even 14 days, and three destructive operational behaviors begin to take root:

- The Rise of “Human Middleware”: When systems refuse to talk to each other, humans are forced to step into the void. Your most highly paid strategic talent (financial analysts, RevOps directors, and HR business partners) will stop analyzing the business and start acting as manual data couriers. They will export data from one broken system into Excel, manipulate it, and manually upload it into another. You are paying millions for software automation, yet paying your staff to do the heavy lifting.

- The Explosion of Shadow IT: If a system makes an employee’s job harder, they will aggressively bypass it. If the new procurement module takes twelve clicks to generate a purchase order instead of three, managers will revert to sending emails to their favorite vendors and sorting out the invoicing later. This destroys your audit trails, obliterates your compliance frameworks, and blinds your finance team to actual cash flow liabilities.

- The Dashboard Collapse: When users abandon the system, the data feeding your executive dashboards immediately becomes toxic. If the system is only capturing 60% of actual operational activity because the rest is happening in Shadow IT, leadership is effectively flying blind. Decisions regarding headcount, revenue forecasting, and inventory are suddenly being made based on corrupted, incomplete datasets.

MainStay’s 30-Day Stabilization Blueprint

At MainStay, we do not believe in hoping for the best. We believe in architectural discipline. When we are brought in to rescue a failing implementation, we immediately deploy a strict, four-phase stabilization blueprint.

This is not a long-term strategic vision; this is a tactical, 30-day emergency intervention designed to stabilize the patient, restore data integrity, and force the enterprise tech stack back into alignment.

Week 1 (Days 1-7): The Triage and Dependency Audit

The first seven days are entirely about stopping the bleeding. We do not write new code, and we do not entertain requests for new system features. We lock down the environment.

- Halt All Enhancements: We institute an immediate freeze on all non-critical system updates, customizations, and feature requests. You cannot build a new roof while the foundation is actively sinking.

- The Helpdesk Interrogation: We bypass the executive summaries and go straight to the IT helpdesk logs. We analyze the highest-frequency support tickets to identify the exact friction points. Are users locked out? Are API payloads failing? Are approval workflows looping infinitely?

- Mapping the Human Middleware: We physically and digitally shadow the front-line operators. We document every single manual workaround, offline spreadsheet, and Slack channel being used to bypass the broken system. This gives us the exact coordinates of where the software is fighting the operational reality of the business.

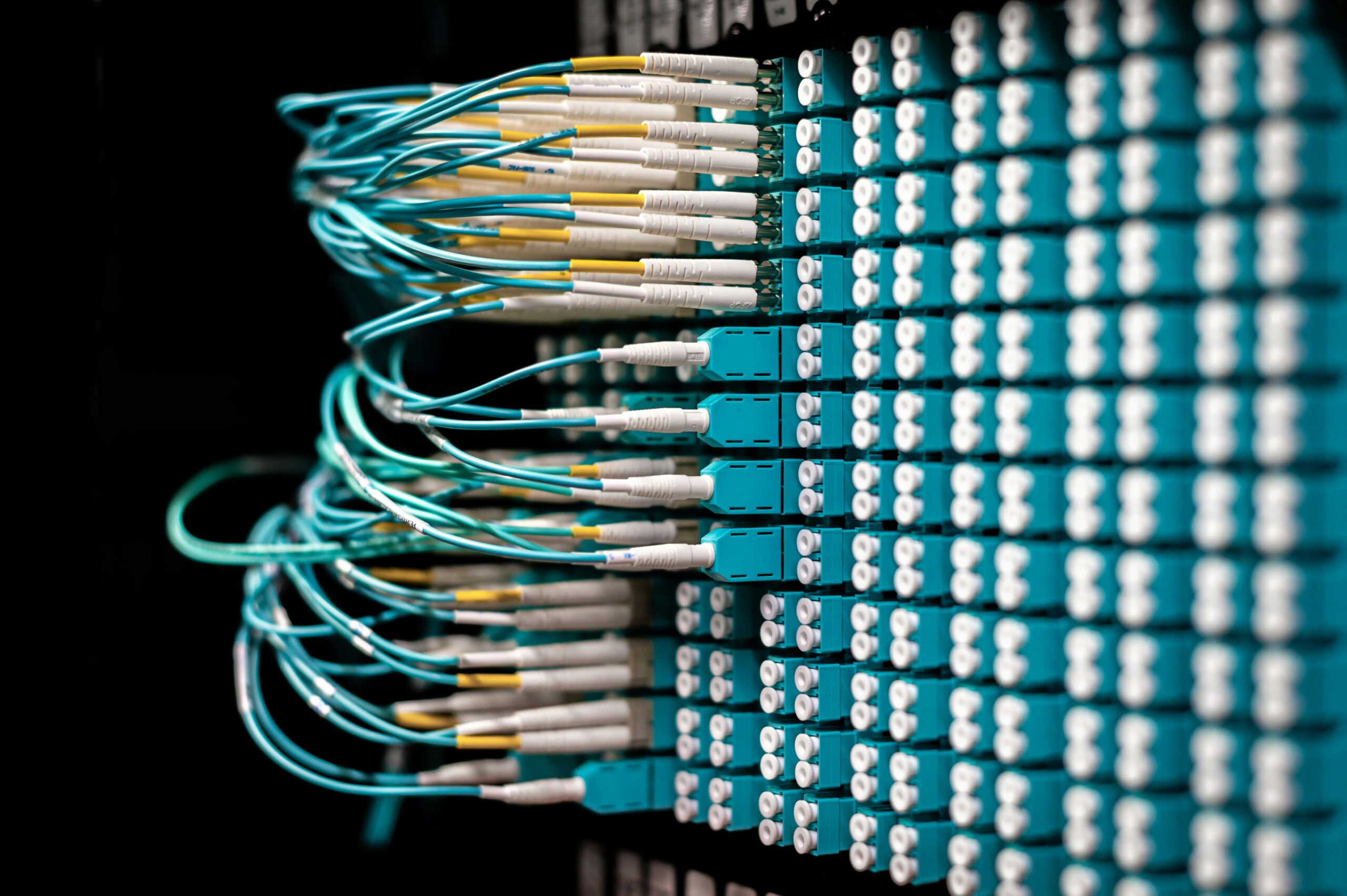

- The API Quarantine: If we identify that a specific integration (e.g., between your HRMS and your Global Payroll engine) is passing corrupted data, we immediately sever the automated connection. We quarantine the data flow and revert to highly controlled, manual batch uploads until the data contract can be rewritten. It is better to have delayed data than corrupted financial data.
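To make the API quarantine concrete, here is a minimal Python sketch of a circuit-breaker-style wrapper, assuming each integration can be fronted by a `send` function and a data-contract check `is_valid`. The class name, the sliding window, and the failure threshold are illustrative assumptions, not MainStay tooling or any vendor's API.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, List


@dataclass
class QuarantinedIntegration:
    """Hypothetical wrapper around one automated link (e.g. HRMS -> payroll).

    Invalid payloads, and every payload sent while the link is quarantined,
    are diverted to a manual batch queue instead of the downstream system.
    """
    send: Callable[[dict], None]       # pushes one payload downstream (assumed)
    is_valid: Callable[[dict], bool]   # data-contract check (assumed)
    window: int = 20                   # how many recent payloads to track
    min_samples: int = 5               # don't judge the link on too few payloads
    max_failure_rate: float = 0.1      # quarantine once failures exceed 10%
    quarantined: bool = False
    manual_batch: List[dict] = field(default_factory=list)
    _recent: Deque[bool] = field(default_factory=deque)

    def submit(self, payload: dict) -> str:
        ok = self.is_valid(payload)
        self._recent.append(ok)
        if len(self._recent) > self.window:
            self._recent.popleft()  # keep a sliding window of outcomes
        failure_rate = self._recent.count(False) / len(self._recent)
        if len(self._recent) >= self.min_samples and failure_rate > self.max_failure_rate:
            self.quarantined = True  # sever the automated connection
        if self.quarantined or not ok:
            self.manual_batch.append(payload)  # held for a controlled manual upload
            return "queued_for_manual_batch"
        self.send(payload)
        return "sent"

    def release(self) -> None:
        """Re-open the automated link once the data contract has been rewritten."""
        self.quarantined = False
        self._recent.clear()
```

A production version would also alert the integration owner when the link trips. The point of the sketch is the order of decisions: validate, measure the failure rate, sever the link, and hold data for manual upload rather than push it through.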

Week 2 (Days 8-14): Master Data and Governance Lockdown

With the immediate bleeding stopped, Week 2 focuses on re-establishing the “Anchor”: the data logic and governance rules that everything else in the system depends on. A system fractures when its underlying data logic is flawed, so we must rebuild the structural rules of engagement.

- Master Data Reconciliation: We run aggressive diagnostic scripts to identify duplicate records, unmapped custom fields, and orphaned data entries that are causing the system to crash. We establish absolute clarity on which system owns the “Master Record” for every piece of enterprise data.

- Redefining Decision Rights: Many post-go-live failures are caused by broken approval matrices. The system was configured based on a theoretical organizational chart, not the actual, on-the-ground authority. We remap the digital workflows to mirror exactly how decisions are actually made in your specific business context.
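A diagnostic script for master data reconciliation can start very simply. The sketch below assumes person records exported as dictionaries with hypothetical `id`, `email`, and `department_id` keys. It flags duplicates (such as a former full-time employee re-created as a contractor) and orphaned references, and the `SYSTEM_OF_RECORD` map is an illustrative way to write down which system owns each master record.

```python
from collections import defaultdict
from typing import Dict, List, Set

# Hypothetical system-of-record map: exactly one owning system per entity type.
SYSTEM_OF_RECORD: Dict[str, str] = {"employee": "HRMS", "customer": "CRM", "invoice": "ERP"}


def normalize_email(email: str) -> str:
    """Match key for people records (trimmed, lower-cased email)."""
    return email.strip().lower()


def find_duplicates(records: List[dict]) -> Dict[str, List[str]]:
    """Group record IDs that share a normalized email address."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for rec in records:
        groups[normalize_email(rec["email"])].append(rec["id"])
    return {key: ids for key, ids in groups.items() if len(ids) > 1}


def find_orphans(records: List[dict], valid_departments: Set[str]) -> List[str]:
    """IDs of records that reference a department missing from the department master."""
    return [rec["id"] for rec in records if rec.get("department_id") not in valid_departments]
```

Real reconciliation usually matches on several attributes (for example a national ID or legacy employee number) rather than email alone; the structure of the check stays the same.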

To understand the difference between a failing internal response and MainStay’s structural intervention, you must look at how specific crisis vectors are handled. The contrast between a standard IT panic response and our architectural fix is stark:

Crisis Vector 1: Broken Integrations and API Failures

- The Standard IT Panic Response: Logging high-priority tickets with software vendors, acting as a passive messenger, and waiting for them to inevitably point fingers at each other’s firewalls.

- The MainStay Structural Fix: We actively intercept the raw API payloads, pinpoint the exact field-mapping failure, and rewrite the data contract so the integration holds under pressure.
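A data contract can be as plain as an explicit list of fields, types, and required flags that both systems agree on. This sketch assumes a hypothetical CRM-to-ERP customer sync; `CUSTOMER_CONTRACT` and `check_contract` are illustrative names, and a real contract would more likely live in a shared schema format such as JSON Schema or OpenAPI than in application code.

```python
from typing import Any, Dict, List, Tuple, Type

# Hypothetical data contract for a CRM -> ERP customer sync:
# field name -> (expected Python type, required?)
CUSTOMER_CONTRACT: Dict[str, Tuple[Type, bool]] = {
    "customer_id": (str, True),
    "legal_entity": (str, True),
    "vat_number": (str, False),
    "credit_limit": (float, True),
}


def check_contract(payload: Dict[str, Any], contract: Dict[str, Tuple[Type, bool]]) -> List[str]:
    """Return one readable error per violation; an empty list means the payload conforms."""
    errors: List[str] = []
    for name, (expected, required) in contract.items():
        value = payload.get(name)
        if value is None:
            if required:
                errors.append(f"{name}: missing required field")
            continue
        # Accept whole numbers where a decimal is expected, but never booleans.
        if expected is float and isinstance(value, int) and not isinstance(value, bool):
            value = float(value)
        if not isinstance(value, expected):
            errors.append(f"{name}: expected {expected.__name__}, got {type(value).__name__}")
    for name in payload:
        if name not in contract:
            errors.append(f"{name}: not in contract (unmapped field)")
    return errors
```

Run against an intercepted payload such as `{"customer_id": 42, "credit_limit": "5000", "region": "EU"}`, it reports the wrong type on `customer_id`, the missing `legal_entity`, the string-typed `credit_limit`, and the unmapped `region` field: the level of detail a vendor needs in order to act.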

Crisis Vector 2: Workflow Bottlenecks and Stuck Approvals

- The Standard IT Panic Response: Instructing frustrated users to manually email their approvals to keep the business moving, promising to log them in the system “later.”

- The MainStay Structural Fix: We restructure the system’s approval matrices to reflect the real-world, on-the-ground operational authority of your managers, eliminating the bureaucratic bottlenecks completely.
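An approval matrix that mirrors real authority can be expressed as an ordered list of roles and signing limits. The roles, limits, and the rule of escalating past an unavailable approver below are hypothetical, chosen only to show how a request can always resolve to someone instead of stalling in a queue.

```python
from typing import FrozenSet, List, Tuple

# Hypothetical approval matrix for one cost centre: (approver role, signing limit).
# Ordered from lowest to highest authority, reflecting who actually signs off.
APPROVAL_MATRIX: List[Tuple[str, float]] = [
    ("team_lead", 5_000.0),
    ("department_head", 50_000.0),
    ("cfo", float("inf")),
]


def route_approval(amount: float, unavailable: FrozenSet[str] = frozenset()) -> str:
    """Return the lowest available role whose limit covers the amount.

    If the natural approver is unavailable (e.g. on leave), the request moves
    up the matrix rather than waiting indefinitely.
    """
    for role, limit in APPROVAL_MATRIX:
        if amount <= limit and role not in unavailable:
            return role
    raise ValueError("no available approver covers this amount")
```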

Crisis Vector 3: Massive User Abandonment

- The Standard IT Panic Response: Sending aggressive, company-wide emails threatening disciplinary action for non-compliance with the new system.

- The MainStay Structural Fix: We identify exactly where the user interface (UI) causes daily friction and streamline the input process, cutting the clicks required until the system removes operational burden from the employee instead of adding to it.

Crisis Vector 4: Master Data Corruption

- The Standard IT Panic Response: Relying on exhausted financial analysts to manually clean up broken spreadsheets at the end of every month before the board meeting.

- The MainStay Structural Fix: We implement hard, systemic validations that prevent bad, incomplete, or incorrectly formatted data from ever being saved into the master database in the first place.
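One way to make validation systemic rather than procedural is to push it into the database schema itself, so a bad record fails at write time instead of surfacing at month-end. This sketch uses Python's built-in `sqlite3` module purely as a stand-in; the `vendor_master` table, its columns, and the 0 to 120 day payment-terms range are illustrative assumptions.

```python
import sqlite3


def create_vendor_master(conn: sqlite3.Connection) -> None:
    """Hypothetical vendor master table whose constraints reject bad rows at write time."""
    conn.execute(
        """
        CREATE TABLE vendor_master (
            vendor_id          TEXT PRIMARY KEY NOT NULL,
            legal_name         TEXT NOT NULL CHECK (length(trim(legal_name)) > 0),
            country            TEXT NOT NULL CHECK (length(country) = 2),  -- 2-letter code
            tax_id             TEXT NOT NULL UNIQUE,                       -- no duplicate vendors
            payment_terms_days INTEGER NOT NULL CHECK (payment_terms_days BETWEEN 0 AND 120)
        )
        """
    )


def save_vendor(conn: sqlite3.Connection, row: dict) -> bool:
    """Insert one vendor; return False if any constraint rejects it."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO vendor_master VALUES "
                "(:vendor_id, :legal_name, :country, :tax_id, :payment_terms_days)",
                row,
            )
        return True
    except sqlite3.IntegrityError:
        return False
```

The design choice is that no user, script, or integration can bypass the rule, because it is enforced by the store itself rather than by a form or a reviewer.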

Week 3 (Days 15-21): SLA Enforcement and Cross-System Orchestration

Once the data logic is anchored, we re-engage the automated “Thrust” of the system (its integrations and cross-system automation), but this time under strict, uncompromising governance.

- Re-Engaging the APIs: We turn the quarantined integrations back on, one by one, in a highly monitored staging environment. We push complex, edge-case data payloads through the pipes to ensure the new data contracts hold under pressure. Only when stability is proven do we push the integrations back into live production.

- Implementing Cross-Silo SLAs: A system cannot function if one department ignores it. If IT is supposed to provision a laptop within 24 hours of an HR onboarding trigger, we hardwire that Service Level Agreement (SLA) into the system. If the SLA is breached, the system automatically triggers an escalation to department leadership. We replace polite requests with systemic, cross-platform orchestration and automated accountability.

- Vendor Accountability Enforcement: We stop asking software vendors for help and start enforcing their contractual SLAs. Armed with exact payload logs and error codes, we eliminate the vendor’s ability to claim “it’s not our system.” We force rapid root-cause resolution at the vendor level.
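The laptop example above can be sketched as a breach check plus an escalation step. This is a minimal illustration, assuming each provisioning task records when the HR trigger fired and when IT completed it; `ProvisioningTask`, `is_breached`, and `escalations` are hypothetical names, and the delivery channel for escalations (email, ticket, chat) is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

LAPTOP_SLA = timedelta(hours=24)  # the provisioning target from the example in the text


@dataclass
class ProvisioningTask:
    new_hire: str
    department: str
    hr_triggered_at: datetime                # when the HR onboarding trigger fired
    provisioned_at: Optional[datetime] = None


def is_breached(task: ProvisioningTask, now: datetime, sla: timedelta = LAPTOP_SLA) -> bool:
    """Breached if finished late, or still open after the deadline has passed."""
    deadline = task.hr_triggered_at + sla
    if task.provisioned_at is not None:
        return task.provisioned_at > deadline
    return now > deadline


def escalations(tasks: List[ProvisioningTask], now: datetime, sla: timedelta = LAPTOP_SLA) -> List[str]:
    """Hypothetical escalation messages routed to each department's leadership."""
    hours = int(sla.total_seconds() // 3600)
    return [
        f"Escalate to {t.department} leadership: laptop for {t.new_hire} missed the {hours}h SLA"
        for t in tasks
        if is_breached(t, now, sla)
    ]
```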

Week 4 (Days 22-30): Adoption Engineering and Habit Formation

A technically stable system is worthless if the workforce is still traumatized by the initial failed launch. Week 4 is about repairing the human relationship with the technology.

- Targeted Re-Onboarding: We do not subject the entire company to another round of generic, three-hour PowerPoint training. We identify the specific manager cohorts who are struggling the most (based on our Week 1 helpdesk audit) and provide highly targeted, role-specific coaching on their exact daily workflows.

- The Decommissioning of Shadow IT: Now that the enterprise system is actually functioning as promised, we aggressively cut off the escape routes. We work with internal IT to deprecate legacy spreadsheets, shut down redundant Slack channels for approvals, and force all operational traffic back into the governed software environment.

- Executive Dashboard Validation: Finally, we sit down with the C-suite. We demonstrate that the data flowing into their revenue, headcount, and operational dashboards is now pulling directly from a structurally sound, fully integrated master database. We restore executive trust in the digital transformation.
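The cohort selection for targeted re-onboarding can be driven directly by the Week 1 helpdesk data. The sketch below assumes each ticket has been tagged with a hypothetical `cohort` field and that headcount per cohort is known. It ranks cohorts by tickets per person rather than raw volume, so a small team that is struggling badly is not hidden behind a large team that simply files more tickets.

```python
from collections import Counter
from typing import Dict, List, Tuple


def struggling_cohorts(tickets: List[dict], headcount: Dict[str, int], top_n: int = 3) -> List[Tuple[str, float]]:
    """Rank cohorts by helpdesk tickets per person, highest first."""
    counts = Counter(t["cohort"] for t in tickets)
    rates = [
        (cohort, counts[cohort] / people)
        for cohort, people in headcount.items()
        if people > 0  # skip empty cohorts to avoid dividing by zero
    ]
    rates.sort(key=lambda item: item[1], reverse=True)
    return rates[:top_n]
```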

Transitioning to Managed Services: Securing the Future

Surviving the first 30 days of post-go-live chaos is a massive victory, but it is only the beginning of true enterprise stability. Once the fire is out and the systems are orchestrating cleanly, you cannot simply hand the keys back to an overwhelmed internal IT department and expect the architecture to maintain itself.

Enterprise software is a living ecosystem that requires continuous tuning, constant vendor updates, and ongoing data governance. Gartner’s ongoing research on IT operations and infrastructure warns that without a dedicated, specialized operational owner, complex integrations will naturally degrade and fracture over time as the business scales.

To ensure you never experience a post-go-live crisis again, the stabilization phase must transition smoothly into an Application Managed Services (AMS) engagement. You need an ISO-certified, compliance-driven partner to continuously monitor the APIs, enforce the data contracts, and govern the system architecture 24/7/365.

Stop the Bleeding. Start the Orchestration.

You did not spend millions of dollars on a digital transformation just to watch your best employees revert to manual data entry and shadow IT. You purchased a vision of frictionless scale, automated efficiency, and total executive visibility. If your current post-go-live reality looks nothing like that vision, you must take immediate, structural action.

Do not accept system downtime as the cost of doing business. Do not allow software vendors to dictate your operational reality. You need an intervention built on ruthless execution discipline, master data governance, and strict architectural clarity.

If your enterprise systems are currently fractured, disjointed, or failing to deliver ROI, it is time to deploy the 30-Day Stabilization Blueprint. Stop renting temporary IT patches and start owning your operational infrastructure.

Next Step: Speak to an expert at MainStay People Consulting today to launch your immediate stabilization protocol and permanently secure the future of your enterprise technology stack.